A Mathematical Theory of Communication

"A Mathematical Theory of Communication" is an article by mathematician Claude E. Shannon, published in two parts in the Bell System Technical Journal in 1948.[1][2][3][4] When it was republished as a book in 1949, the title was changed to The Mathematical Theory of Communication,[5] a small but significant change that reflected how general the work had come to be seen. It became one of the most cited scientific articles and gave rise to the field of information theory.[6]

Publication

The article was the founding work of the field of information theory. It was republished in 1949 as a book titled The Mathematical Theory of Communication (ISBN 0-252-72546-8), with a paperback edition following in 1963 (ISBN 0-252-72548-4). The book also contains an article by Warren Weaver that gives an overview of the theory for a more general audience.

Contents

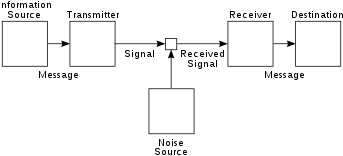

Shannon's article laid out the basic elements of communication:

- An information source that produces a message

- A transmitter that operates on the message to create a signal which can be sent through a channel

- A channel, which is the medium over which the signal, carrying the information that composes the message, is sent

- A receiver, which transforms the signal back into the message intended for delivery

- A destination, which can be a person or a machine, for whom or which the message is intended
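The five elements listed above can be sketched as a chain of functions. This is only an illustrative toy, not anything taken from Shannon's article: the function names, the 8-bit ASCII encoding, and the `flip_probability` noise parameter are all assumptions chosen for the example.

```python
import random


def information_source() -> str:
    """Information source: produces the message (hypothetical fixed text)."""
    return "HELLO"


def transmitter(message: str) -> list[int]:
    """Transmitter: turns the message into a signal, here 8 bits per character."""
    signal = []
    for byte in message.encode("ascii"):
        signal.extend((byte >> shift) & 1 for shift in range(7, -1, -1))
    return signal


def channel(signal: list[int], flip_probability: float = 0.0, seed: int = 0) -> list[int]:
    """Channel: carries the signal; each bit is flipped with the given probability."""
    rng = random.Random(seed)
    return [(bit ^ 1) if rng.random() < flip_probability else bit for bit in signal]


def receiver(signal: list[int]) -> str:
    """Receiver: rebuilds the message from the received signal."""
    chars = []
    for start in range(0, len(signal), 8):
        byte = 0
        for bit in signal[start:start + 8]:
            byte = (byte << 1) | bit
        chars.append(chr(byte))
    return "".join(chars)


def destination(message: str) -> str:
    """Destination: the person or machine for whom the message is intended."""
    return message


# With a noiseless channel the destination gets back exactly what the source sent.
delivered = destination(receiver(channel(transmitter(information_source()))))
```

Raising `flip_probability` above zero corrupts some bits in transit, which is the situation that error-correcting codes are designed to handle.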

It also developed the concepts of information entropy and redundancy, and introduced the term bit (which Shannon credited to John Tukey) as a unit of information. The paper also described the coding technique now known as Shannon–Fano coding, which Shannon noted was substantially the same as a method found independently by Robert Fano.
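As a rough illustration of entropy and redundancy, the sketch below computes the entropy of a discrete distribution in bits, H = -Σ p·log2(p), and the redundancy as one minus the ratio of that entropy to its maximum for the same number of symbols. The function names and the example distributions are assumptions made for this example.

```python
import math


def entropy_bits(probabilities: list[float]) -> float:
    """Entropy in bits: H = -sum(p * log2(p)), skipping zero-probability symbols."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


def redundancy(probabilities: list[float]) -> float:
    """One minus the ratio of the entropy to its maximum, log2(number of symbols)."""
    symbol_count = len(probabilities)
    if symbol_count < 2:
        return 0.0  # a single-symbol alphabet has no meaningful maximum entropy
    return 1 - entropy_bits(probabilities) / math.log2(symbol_count)


fair_coin = [0.5, 0.5]      # one full bit per toss, no redundancy
biased_coin = [0.9, 0.1]    # less than one bit per toss, so some redundancy

fair_entropy = entropy_bits(fair_coin)
biased_entropy = entropy_bits(biased_coin)
biased_redundancy = redundancy(biased_coin)
```

Using base-2 logarithms gives the result in bits; a different base would only change the unit.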

References

- Shannon, Claude Elwood (July 1948). "A Mathematical Theory of Communication" (PDF). Bell System Technical Journal. 27 (3): 379–423. doi:10.1002/j.1538-7305.1948.tb01338.x. hdl:11858/00-001M-0000-002C-4314-2. Archived from the original (PDF) on 1998-07-15. Quote: "The choice of a logarithmic base corresponds to the choice of a unit for measuring information. If the base 2 is used the resulting units may be called binary digits, or more briefly bits, a word suggested by J. W. Tukey."

- Shannon, Claude Elwood (October 1948). "A Mathematical Theory of Communication". Bell System Technical Journal. 27 (4): 623–666. doi:10.1002/j.1538-7305.1948.tb00917.x. hdl:11858/00-001M-0000-002C-4314-2.

- Ash, Robert B. (1966). Information Theory: Tracts in Pure & Applied Mathematics. New York: John Wiley & Sons Inc. ISBN 0-470-03445-9.

- Yeung, Raymond W. (2008). "The Science of Information". Information Theory and Network Coding. Springer. pp. 1–4. doi:10.1007/978-0-387-79234-7_1. ISBN 978-0-387-79233-0.

- Shannon, Claude Elwood; Weaver, Warren (1949). The Mathematical Theory of Communication (PDF). University of Illinois Press. ISBN 0-252-72548-4. Archived from the original (PDF) on 1998-07-15.

- Van Noorden, Richard; Maher, Brendan; Nuzzo, Regina (2014). "The top 100 papers". Nature. 514 (7524): 550–553. doi:10.1038/514550a. PMID 25355343. S2CID 4466906.
