Collective action problem

A collective action problem or social dilemma is a situation in which all individuals would be better off cooperating but fail to do so because of conflicting interests between individuals that discourage joint action.[1][2][3] The collective action problem has been addressed in political philosophy for centuries, but was most clearly established in 1965 in Mancur Olson's The Logic of Collective Action.

Problems arise when too many group members choose to pursue individual profit and immediate satisfaction rather than behave in the group's best long-term interests. Social dilemmas can take many forms and are studied across disciplines such as psychology, economics, and political science. Examples of phenomena that can be explained using social dilemmas include resource depletion, low voter turnout, and overpopulation. The collective action problem can be understood through the analysis of game theory and the free-rider problem, which results from the provision of public goods. Additionally, the collective action problem can be applied to numerous public policy concerns that countries across the world currently face.

Prominent theorists

Early thought

Although he never used the words "collective action problem", Thomas Hobbes was an early philosopher on the topic of human cooperation. Hobbes believed that people act purely out of self-interest, writing in Leviathan in 1651 that "if any two men desire the same thing, which nevertheless they cannot both enjoy, they become enemies."[4] Hobbes believed that the state of nature consists of a perpetual war between people with conflicting interests, causing people to quarrel and seek personal power even in situations where cooperation would be mutually beneficial for both parties. Through his interpretation of humans in the state of nature as selfish and quick to engage in conflict, Hobbes's philosophy laid the foundation for what is now referred to as the collective action problem.

David Hume provided another early and better-known interpretation of what is now called the collective action problem in A Treatise of Human Nature (1739–40). Hume characterizes a collective action problem through his depiction of neighbors agreeing to drain a meadow:

Two neighbours may agree to drain a meadow, which they possess in common; because it is easy for them to know each others mind; and each must perceive, that the immediate consequence of his failing in his part, is, the abandoning the whole project. But it is very difficult, and indeed impossible, that a thousand persons should agree in any such action; it being difficult for them to concert so complicated a design, and still more difficult for them to execute it; while each seeks a pretext to free himself of the trouble and expence, and would lay the whole burden on others.[5]

In this passage, Hume establishes the basis for the collective action problem. In a situation in which a thousand people are expected to work together to achieve a common goal, individuals will be likely to free ride, as they assume that each of the other members of the team will put in enough effort to achieve said goal. In smaller groups, the impact one individual has is much greater, so individuals will be less inclined to free ride.

Modern thought

The most prominent modern interpretation of the collective action problem can be found in Mancur Olson's 1965 book The Logic of Collective Action.[6] In it, he challenged the belief, widely accepted among sociologists and political scientists at the time, that groups were necessary to further the interests of their members. Olson argued that individual rationality does not necessarily result in group rationality, as members of a group may have conflicting interests that do not represent the best interests of the overall group.

Olson further argued that in the case of a pure public good that is both nonrival and nonexcludable, one contributor tends to reduce their contribution to the public good as others contribute more. Additionally, Olson emphasized the tendency of individuals to pursue economic interests that would be beneficial to themselves and not necessarily the overall public. This contrasts with Adam Smith's theory of the "invisible hand" of the market, where individuals pursuing their own interests should theoretically result in the collective well-being of the overall market.[6]

Olson's book established the collective action problem as one of the most troubling dilemmas in social science, leaving a profound impression on present-day discussions of human behavior and its relationship with governmental policy.

Theories

Game theory

Social dilemmas have attracted a great deal of interest in the social and behavioral sciences. Economists, biologists, psychologists, sociologists, and political scientists alike study behavior in social dilemmas. The most influential theoretical approach is economic game theory (i.e., rational choice theory, expected utility theory). Game theory assumes that individuals are rational actors motivated to maximize their utilities. Utility is often narrowly defined in terms of people's economic self-interest. Game theory thus predicts a non-cooperative outcome in a social dilemma. Although this is a useful starting premise, there are many circumstances in which people deviate from individual rationality, demonstrating the limitations of economic game theory.[7]

Game theory is one of the principal components of economic theory. It addresses the way individuals allocate scarce resources and how scarcity drives human interaction.[8] One of the most famous examples of game theory is the prisoner's dilemma. The classical prisoner's dilemma model consists of two players who are accused of a crime. If Player A decides to betray Player B, Player A will receive no prison time while Player B receives a substantial prison sentence, and vice versa. If both players choose to keep quiet about the crime, they will both receive reduced prison sentences, and if both players turn each other in, they will each receive more substantial sentences. It would appear in this situation that each player should choose to stay quiet so that both will receive reduced sentences. In actuality, however, players who are unable to communicate will both choose to betray each other, as each has an individual incentive to do so in order to receive a lighter sentence.[9]

Prisoner's dilemma

The prisoner's dilemma model is crucial to understanding the collective action problem because it illustrates the consequences of individual interests that conflict with the interests of the group. In simple models such as this one, the problem would have been solved had the two prisoners been able to communicate. In more complex real-world situations involving numerous individuals, however, the collective action problem often prevents groups from making decisions that are of collective economic interest.[10]

The prisoner's dilemma is a simple game[11] that serves as the basis for research on social dilemmas.[12] The premise of the game is that two partners in crime are imprisoned separately and each is offered leniency if they provide evidence against the other. As seen in the table below, the optimal individual outcome is to testify against the other without being testified against. However, the optimal group outcome is for the two prisoners to cooperate with each other.

| | Prisoner B does not confess (cooperates) | Prisoner B confesses (defects) |
|---|---|---|
| Prisoner A does not confess (cooperates) | Each serves 1 year | Prisoner A: 3 years; Prisoner B: goes free |
| Prisoner A confesses (defects) | Prisoner A: goes free; Prisoner B: 3 years | Each serves 2 years |
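The reasoning behind the payoff table above can be checked mechanically. The following is a minimal sketch, assuming that "goes free" counts as 0 years and that each prisoner simply wants to minimize their own sentence; the function and variable names are illustrative and not taken from the literature.

```python
from itertools import product

MOVES = ("cooperate", "defect")

# Years in prison as (Prisoner A, Prisoner B), copied from the table.
# Lower is better; "goes free" is treated as 0 years (an assumption).
YEARS = {
    ("cooperate", "cooperate"): (1, 1),
    ("cooperate", "defect"): (3, 0),
    ("defect", "cooperate"): (0, 3),
    ("defect", "defect"): (2, 2),
}


def best_response_for_a(b_move):
    """A's sentence-minimizing move, given what B does."""
    return min(MOVES, key=lambda a_move: YEARS[(a_move, b_move)][0])


def best_response_for_b(a_move):
    """B's sentence-minimizing move, given what A does."""
    return min(MOVES, key=lambda b_move: YEARS[(a_move, b_move)][1])


def nash_equilibria():
    """Move pairs in which neither prisoner gains by switching alone."""
    return [
        (a, b)
        for a, b in product(MOVES, MOVES)
        if best_response_for_a(b) == a and best_response_for_b(a) == b
    ]


def best_for_the_pair():
    """Move pair with the fewest total years served."""
    return min(YEARS, key=lambda pair: sum(YEARS[pair]))


print(nash_equilibria())    # [('defect', 'defect')]
print(best_for_the_pair())  # ('cooperate', 'cooperate')
```

Under these assumptions, defecting is each prisoner's best response to either move by the other, so mutual defection is the only stable outcome even though mutual cooperation serves the pair better.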

In iterated games, players may learn to trust one another, or develop strategies like tit-for-tat, cooperating unless the opponent has defected in the previous round.

Asymmetric prisoner's dilemma games are those in which one prisoner has more to gain and/or lose than the other.[13] In iterated experiments with unequal rewards for co-operation, a goal of maximizing benefit may be overruled by a goal of equalizing benefit. The disadvantaged player may defect a certain proportion of the time without it being in the interest of the advantaged player to defect.[14] In more natural circumstances, there may be better solutions to the bargaining problem.

Related games include the Snowdrift game, Stag hunt, the Unscrupulous diner's dilemma, and the Centipede game.

Evolutionary theories

Biological and evolutionary approaches provide useful complementary insights into decision-making in social dilemmas. According to selfish gene theory, individuals may pursue a seemingly irrational strategy to cooperate if it benefits the survival of their genes. The concept of inclusive fitness holds that cooperating with family members might pay because of shared genetic interests. It might be profitable for a parent to help their offspring because doing so facilitates the survival of their genes. Reciprocity theories provide a different account of the evolution of cooperation. In repeated social dilemma games between the same individuals, cooperation might emerge because participants can punish a partner for failing to cooperate. This encourages reciprocal cooperation. Reciprocity serves as an explanation for why participants cooperate in dyads, but fails to account for larger groups. Evolutionary theories of indirect reciprocity and costly signaling may be useful to explain large-scale cooperation. When people can selectively choose partners to play games with, it pays to develop a cooperative reputation. Cooperation communicates kindness and generosity, which combine to make someone an attractive group member.

Psychological theories

Psychological models offer additional insights into social dilemmas by questioning the game theory assumption that individuals are confined to their narrow self-interest. Interdependence theory suggests that people transform a given pay-off matrix into an effective matrix that is more consistent with their social dilemma preferences. A prisoner's dilemma with close kin, for example, changes the pay-off matrix into one in which it is rational to be cooperative. Attribution models offer further support for these transformations. Whether individuals approach a social dilemma selfishly or cooperatively might depend upon whether they believe people are naturally greedy or cooperative. Similarly, goal-expectation theory assumes that people might cooperate under two conditions: they must (1) have a cooperative goal, and (2) expect others to cooperate. Another psychological model, the appropriateness model, questions the game theory assumption that individuals rationally calculate their pay-offs. Instead, many people base their decisions on what people around them do and use simple heuristics, like an equality rule, to decide whether or not to cooperate. The logic of appropriateness suggests that people ask themselves the question "what does a person like me (identity) do (rules/heuristics) in a situation like this (recognition) given this culture (group)?" (Weber et al., 2004;[15] Kopelman, 2009[16]) and that these factors influence cooperation.
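The idea of transforming a given pay-off matrix into an effective one can be illustrated with a small sketch. It assumes, purely for illustration, that a prisoner's effective cost is their own sentence plus a weight times the other prisoner's sentence; the weight `weight_on_other` and the sentence lengths (1, 2, and 3 years, with 0 for going free) are hypothetical modelling choices, not part of interdependence theory itself.

```python
MOVES = ("cooperate", "defect")

# Given matrix: years in prison as (Prisoner A, Prisoner B). Lower is better.
YEARS = {
    ("cooperate", "cooperate"): (1, 1),
    ("cooperate", "defect"): (3, 0),
    ("defect", "cooperate"): (0, 3),
    ("defect", "defect"): (2, 2),
}


def effective_cost_for_a(a_move, b_move, weight_on_other):
    """A's cost once A also cares about B's sentence.

    weight_on_other = 0 means pure self-interest;
    weight_on_other = 1 means B's years count as much as A's own.
    """
    own_years, other_years = YEARS[(a_move, b_move)]
    return own_years + weight_on_other * other_years


def dominant_move_for_a(weight_on_other):
    """Return the move that is strictly better for A whatever B does, or None."""
    for candidate in MOVES:
        alternative = "defect" if candidate == "cooperate" else "cooperate"
        if all(
            effective_cost_for_a(candidate, b_move, weight_on_other)
            < effective_cost_for_a(alternative, b_move, weight_on_other)
            for b_move in MOVES
        ):
            return candidate
    return None


print(dominant_move_for_a(0.0))  # defect
print(dominant_move_for_a(1.0))  # cooperate
```

With these particular numbers the switch happens once the weight exceeds 0.5; at exactly 0.5 the two moves tie and neither is dominant.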

Public goods

A public goods dilemma is a situation in which the whole group can benefit if some of the members give something for the common good but individuals benefit from “free riding” if enough others contribute.[17] Public goods are defined by two characteristics: non-excludability and non-rivalry—meaning that anyone can benefit from them and one person's use of them does not hinder another person's use of them. An example is public broadcasting that relies on contributions from viewers. Since no single viewer is essential for providing the service, viewers can reap the benefits of the service without paying anything for it. If not enough people contribute, the service cannot be provided. In economics, the literature around public goods dilemmas refers to the phenomenon as the free rider problem. The economic approach is broadly applicable and can refer to the free-riding that accompanies any sort of public good.[18] In social psychology, the literature refers to this phenomenon as social loafing. Whereas free-riding is generally used to describe public goods, social loafing refers specifically to the tendency for people to exert less effort when in a group than when working alone.[19]

Public goods are goods that are nonrival and nonexcludable. A good is said to be nonrival if its consumption by one consumer does not in any way impact its consumption by another consumer. Additionally, a good is said to be nonexcludable if those who do not pay for the good cannot be kept from enjoying the benefits of the good.[20] The nonexcludability aspect of public goods is where one facet of the collective action problem, known as the free-rider problem, comes into play. For instance, a company could put on a fireworks display and charge an admittance price of $10, but if community members could all view the fireworks display from their homes, most would choose not to pay the admittance fee. Thus, the majority of individuals would choose to free ride, discouraging the company from putting on another fireworks show in the future. Even though the fireworks display was surely beneficial to each of the individuals, they relied on those paying the admittance fee to finance the show. If everybody had assumed this position, however, the company putting on the show would not have been able to procure the funds necessary to buy the fireworks that provided enjoyment for so many individuals. This situation is indicative of a collective action problem because the individual incentive to free ride conflicts with the collective desire of the group to pay for a fireworks show for all to enjoy.[20]
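The conflict between the individual incentive to free ride and the group's interest can be made concrete with a linear public goods game, a standard laboratory model. The sketch below is a minimal illustration; the endowment of 10, the multiplier of 2, and the group of four are assumed values chosen so the arithmetic is easy to follow.

```python
def payoffs(contributions, endowment=10.0, multiplier=2.0):
    """Return each player's payoff in a linear public goods game.

    Each player keeps whatever they do not contribute. The pooled
    contributions are multiplied and shared equally among ALL players,
    whether or not they contributed (non-excludability).
    """
    n_players = len(contributions)
    share = multiplier * sum(contributions) / n_players
    return [endowment - given + share for given in contributions]


everyone_contributes = payoffs([10, 10, 10, 10])
nobody_contributes = payoffs([0, 0, 0, 0])
one_free_rider = payoffs([0, 10, 10, 10])  # player 0 free rides

print(everyone_contributes)  # [20.0, 20.0, 20.0, 20.0]
print(nobody_contributes)    # [10.0, 10.0, 10.0, 10.0]
print(one_free_rider)        # [25.0, 15.0, 15.0, 15.0]
```

In this sketch the free rider earns more than anyone in the fully cooperative group, yet if everyone reasons the same way, all end up with the lowest of the three outcomes.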

Pure public goods include services such as national defense and public parks that are usually provided by governments using taxpayer funds.[20] In return for their tax contribution, taxpayers enjoy the benefits of these public goods. In developing countries where funding for public projects is scarce, however, it often falls on communities to compete for resources and finance projects that benefit the collective group.[21] The ability of communities to successfully contribute to public welfare depends on the size of the group, the power or influence of group members, the tastes and preferences of individuals within the group, and the distribution of benefits among group members. When a group is too large or the benefits of collective action are not tangible to individual members, the collective action problem results in a lack of cooperation that makes the provision of public goods difficult.[21]

Replenishing resource management

A replenishing resource management dilemma is a situation in which group members share a renewable resource that will continue to produce benefits if group members do not over harvest it but in which any single individual profits from harvesting as much as possible.[22]
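A small simulation can illustrate this kind of dilemma. The sketch below assumes a hypothetical fishery with logistic regrowth; the starting stock, carrying capacity, growth rate, and harvest sizes are all illustrative values rather than empirical ones.

```python
def simulate(harvest_requests, rounds=50, stock=100.0,
             capacity=100.0, growth_rate=0.5):
    """Simulate a shared renewable resource.

    harvest_requests: how much each group member tries to take per round.
    Returns (total harvested by each member, stock left at the end).
    """
    totals = [0.0] * len(harvest_requests)
    demand = sum(harvest_requests)
    for _ in range(rounds):
        # Members cannot take more than what is left; if the stock falls
        # short, each member's take is scaled back proportionally.
        taken = min(demand, stock)
        if demand > 0:
            for i, request in enumerate(harvest_requests):
                totals[i] += taken * request / demand
        stock -= taken
        # Logistic regrowth: fastest at half capacity, zero when empty.
        stock += growth_rate * stock * (1 - stock / capacity)
    return totals, stock


restrained_totals, restrained_stock = simulate([1] * 10)
greedy_totals, greedy_stock = simulate([3] * 10)
mixed_totals, mixed_stock = simulate([3] + [1] * 9)

print(sum(restrained_totals), restrained_stock)
print(sum(greedy_totals), greedy_stock)
print(mixed_totals[0], mixed_totals[1])
```

Under these assumptions, a group of ten that takes one unit each can harvest indefinitely, while a group that takes three units each exhausts the stock within a few rounds and ends up with less in total. The third run shows the temptation: a single member who takes three while the others take one does better than those who show restraint.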

Tragedy of the commons

The tragedy of the commons is a type of replenishing resource management dilemma. The dilemma arises when members of a group share a common good. A common good is rivalrous and non-excludable, meaning that anyone can use the resource but there is a finite amount of the resource available and it is therefore prone to overexploitation.[24]

The paradigm of the tragedy of the commons first appeared in an 1833 pamphlet by English economist William Forster Lloyd. According to Lloyd, "If a person puts more cattle into his own field, the amount of the subsistence which they consume is all deducted from that which was at the command, of his original stock; and if, before, there was no more than a sufficiency of pasture, he reaps no benefit from the additional cattle, what is gained in one way being lost in another. But if he puts more cattle on a common, the food which they consume forms a deduction which is shared between all the cattle, as well that of others as his own, in proportion to their number, and only a small part of it is taken from his own cattle".[25]

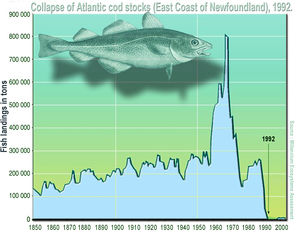

The template of the tragedy of the commons can be used to understand myriad problems, including various forms of resource depletion. For example, overfishing in the 1960s and 1970s led to depletion of the previously abundant supply of Atlantic cod. By 1992, the population of cod had completely collapsed because fishers had not left enough fish to repopulate the species.[23] Another example is the higher rate of COVID-19 cases and deaths in individualistic countries than in collectivist ones.[26]

Social traps

A social trap occurs when individuals or groups pursue immediate rewards that later prove to have negative or even lethal consequences.[27] This type of dilemma arises when a behavior produces rewards initially but continuing the same behavior produces diminishing returns. Stimuli that cause social traps are called sliding reinforcers, since they reinforce the behavior in small doses and punish it in large doses.

An example of a social trap is the use of vehicles and the resulting pollution. Viewed individually, vehicles are an adaptive technology that has revolutionized transportation and greatly improved quality of life. But their current widespread use produces high levels of pollution, either directly from their energy source or over their lifespan.

Perceptual dilemma

A perceptual dilemma arises during conflict and is a product of outgroup bias. In this dilemma, the parties to the conflict prefer cooperation while simultaneously believing that the other side would take advantage of conciliatory gestures.[28]

In conflict

The prevalence of perceptual dilemmas in conflict has led to the development of two distinct schools of thought on the subject. According to deterrence theory, the best strategy to take in conflict is to show signs of strength and willingness to use force if necessary. This approach is intended to dissuade attacks before they happen. Conversely, the conflict spiral view holds that deterrence strategies increase hostilities and defensiveness and that a clear demonstration of peaceful intentions is the most effective way to avoid escalation.[29]

An example of deterrence theory in practice is the Cold War strategy (employed by both the United States and the Soviet Union) of mutually assured destruction (MAD). Because both countries had second-strike capability, each side knew that the use of nuclear weapons would result in its own destruction. While controversial, MAD succeeded in its primary purpose of preventing nuclear war and kept the Cold War cold.

Conciliatory gestures have also been used to great effect, in keeping with conflict spiral theory. For example, Egyptian President Anwar El Sadat's 1977 visit to Israel during a prolonged period of hostilities between the two countries was well received and ultimately contributed to the Egypt–Israel Peace Treaty.

In politics

Voting

Scholars estimate that, even in a battleground state, there is only a one in ten million chance that one vote could sway the outcome of a United States presidential election.[30] This statistic may discourage individuals from exercising their democratic right to vote, as they believe they could not possibly affect the results of an election. If everybody adopted this view and decided not to vote, however, democracy would collapse. This situation results in a collective action problem, as any single individual is incentivized to choose to stay home from the polls since their vote is very unlikely to make a real difference in the outcome of an election.
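The individual incentive described above can be put as back-of-the-envelope arithmetic. The sketch below assumes a simple expected-value view of voting; the one-in-ten-million probability comes from the estimate cited above, while the dollar figures for the benefit and the cost are purely hypothetical.

```python
# Chance that one vote decides the election, per the estimate cited above.
P_DECISIVE = 1 / 10_000_000


def expected_net_benefit(p_decisive, benefit_if_decisive, cost_of_voting):
    """Expected personal gain from voting, in the same units as the inputs."""
    return p_decisive * benefit_if_decisive - cost_of_voting


def break_even_benefit(p_decisive, cost_of_voting):
    """How large the personal benefit must be before voting 'pays'."""
    return cost_of_voting / p_decisive


# Hypothetical values: the voter's preferred outcome is worth 10,000 to them,
# and voting costs the equivalent of 10 in time and effort.
print(expected_net_benefit(P_DECISIVE, 10_000, 10))
print(break_even_benefit(P_DECISIVE, 10))
```

On these assumptions the expected gain is negative, and the preferred outcome would have to be worth roughly a hundred million to the individual voter before the calculation favored going to the polls.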

Despite high levels of political apathy in the United States, however, this collective action problem does not decrease voter turnout as much as some political scientists might expect.[31] It turns out that most Americans believe their political efficacy to be higher than it actually is, stopping millions of Americans from believing their vote does not matter and staying home from the polls. Thus, it appears collective action problems can be resolved not just by tangible benefits to individuals participating in group action, but by a mere belief that collective action will also lead to individual benefits.

Environmental policy

Environmental problems such as climate change, biodiversity loss, and waste accumulation can be described as collective action problems.[32] Since these issues are connected to the everyday actions of vast numbers of people, vast numbers of people are also required to mitigate the effects of these environmental problems. Without governmental regulation, however, individual people or businesses are unlikely to take the actions necessary to reduce carbon emissions or cut back on usage of non-renewable resources, as these people and businesses are incentivized to choose the easier and cheaper option, which often differs from the environmentally friendly option that would benefit the health of the planet.[32]

Individual self-interest has led to over half of Americans believing that government regulation of businesses does more harm than good. Yet, when the same Americans are asked about specific regulations such as standards for food and water quality, most are satisfied with the laws currently in place or favor even more stringent regulations.[33] This illustrates the way the collective action problem hinders group action on environmental issues: when an individual is directly affected by an issue such as food and water quality, they will favor regulations, but when an individual cannot see a great impact from their personal carbon emissions or waste accumulation, they will generally tend to disagree with laws that encourage them to cut back on environmentally harmful activities.

Factors promoting cooperation in social dilemmas

Studying the conditions under which people cooperate can shed light on how to resolve social dilemmas. The literature distinguishes between three broad classes of solutions—motivational, strategic, and structural—which vary in whether they see actors as motivated purely by self-interest and in whether they change the rules of the social dilemma game.

Motivational solutions

Motivational solutions assume that people have other-regarding preferences. There is a considerable literature on social value orientations which shows that people have stable preferences for how much they value outcomes for self versus others. Research has concentrated on three social motives: (1) individualism—maximizing own outcomes regardless of others; (2) competition—maximizing own outcomes relative to others; and (3) cooperation—maximizing joint outcomes. The first two orientations are referred to as proself orientations and the third as a prosocial orientation. There is much support for the idea that prosocial and proself individuals behave differently when confronted with a social dilemma in the laboratory as well as the field.[citation needed] People with prosocial orientations weigh the moral implications of their decisions more and see cooperation as the most preferable choice in a social dilemma. When there are conditions of scarcity, like a water shortage, prosocials harvest less from a common resource. Similarly prosocials are more concerned about the environmental consequences of, for example, taking the car or public transport.[34]

Research on the development of social value orientations suggests an influence of factors like family history (prosocials have more sisters), age (older people are more prosocial), culture (more individualists in Western cultures), gender (more women are prosocial), and even university course (economics students are less prosocial). However, until we know more about the psychological mechanisms underlying these social value orientations, we lack a good basis for interventions.

Another factor that might affect the weight individuals assign to group outcomes is the possibility of communication. A robust finding in the social dilemma literature is that cooperation increases when people are given a chance to talk to each other. It has been quite a challenge to explain this effect. One motivational reason is that communication reinforces a sense of group identity.[35]

However, there may be strategic considerations as well. First, communication gives group members a chance to make promises and explicit commitments about what they will do. It is not clear if many people stick to their promises to cooperate. Similarly, through communication people are able to gather information about what others do. On the other hand, this information might produce ambiguous results; an awareness of other people's willingness to cooperate may cause a temptation to take advantage of them.

Social dilemma theory has been applied to study social media communication and knowledge sharing in organizations. Organizational knowledge can be considered a public good where motivation to contribute is key. Both intrinsic and extrinsic motivation are important at the individual level and can be addressed through managerial interventions.[36]

Strategic solutions

A second category of solutions is primarily strategic. In repeated interactions, cooperation might emerge when people adopt a tit-for-tat (TFT) strategy. TFT is characterized by first making a cooperative move and then, on each subsequent move, mimicking the partner's previous decision. Thus, if a partner does not cooperate, you copy this move until your partner starts to cooperate. Computer tournaments in which different strategies were pitted against each other showed TFT to be the most successful strategy in social dilemmas. TFT is a common strategy in real-world social dilemmas because it is nice but firm. Consider, for instance, that marriage contracts, rental agreements, and international trade policies all use TFT tactics.
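The tit-for-tat rule described above is simple enough to sketch in a few lines. The example below is a minimal illustration of a repeated two-player game; the point values (3 for mutual cooperation, 5 and 0 when one side defects, 1 for mutual defection) and the ten-round length are assumptions chosen for illustration, and the function names are hypothetical.

```python
# Points per round for (my move, partner's move); higher is better.
# "C" = cooperate, "D" = defect. The numbers are illustrative assumptions.
PAYOFF = {
    ("C", "C"): 3,
    ("C", "D"): 0,
    ("D", "C"): 5,
    ("D", "D"): 1,
}


def tit_for_tat(partner_history):
    """Cooperate first, then copy the partner's previous move."""
    return "C" if not partner_history else partner_history[-1]


def always_defect(partner_history):
    """Defect no matter what the partner has done."""
    return "D"


def play(strategy_a, strategy_b, rounds=10):
    """Play a repeated game and return (score of A, score of B)."""
    history_a, history_b = [], []
    score_a = score_b = 0
    for _ in range(rounds):
        # Each strategy only sees what the partner did in earlier rounds.
        move_a = strategy_a(history_b)
        move_b = strategy_b(history_a)
        history_a.append(move_a)
        history_b.append(move_b)
        score_a += PAYOFF[(move_a, move_b)]
        score_b += PAYOFF[(move_b, move_a)]
    return score_a, score_b


print(play(tit_for_tat, tit_for_tat))      # (30, 30)
print(play(tit_for_tat, always_defect))    # (9, 14)
print(play(always_defect, always_defect))  # (10, 10)
```

In this sketch TFT is exploited only once by a persistent defector, while two TFT players sustain full cooperation and each earn far more than two defectors do.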

However, TFT is quite an unforgiving strategy and in noisy real-world dilemmas a more forgiving strategy has its own advantages. Such a strategy is known as Generous-tit-for-tat (GTFT).[37] This strategy always reciprocates cooperation with cooperation, and usually replies to defection with defection. However, with some probability GTFT will forgive a defection by the other player and cooperate. In a world of errors in action and perception, such a strategy can be a Nash equilibrium and evolutionarily stable. The more beneficial cooperation is, the more forgiving GTFT can be while still resisting invasion by defectors.
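The effect of forgiveness under noise can be explored with a short simulation. This is a sketch under stated assumptions: two players use the same strategy, each intended move is flipped with a small probability (`noise`) to represent errors in action, and the point values are illustrative. Setting `forgiveness` to 0 gives plain tit-for-tat; the parameter names are hypothetical.

```python
import random

# Points per round for (my move, partner's move); illustrative assumptions.
PAYOFF = {
    ("C", "C"): 3,
    ("C", "D"): 0,
    ("D", "C"): 5,
    ("D", "D"): 1,
}


def flip(move):
    """Turn a cooperative move into a defection and vice versa."""
    return "D" if move == "C" else "C"


def generous_tit_for_tat(partner_last_move, forgiveness, rng):
    """Reciprocate cooperation; forgive a defection with some probability."""
    if partner_last_move is None or partner_last_move == "C":
        return "C"
    return "C" if rng.random() < forgiveness else "D"


def average_payoff(forgiveness, noise=0.05, rounds=20_000, seed=0):
    """Average points per player per round when both use the same strategy."""
    rng = random.Random(seed)  # seeded so that runs are repeatable
    last_a = last_b = None
    total = 0
    for _ in range(rounds):
        move_a = generous_tit_for_tat(last_b, forgiveness, rng)
        move_b = generous_tit_for_tat(last_a, forgiveness, rng)
        # Errors in action: occasionally the executed move is not the intended one.
        if rng.random() < noise:
            move_a = flip(move_a)
        if rng.random() < noise:
            move_b = flip(move_b)
        total += PAYOFF[(move_a, move_b)] + PAYOFF[(move_b, move_a)]
        last_a, last_b = move_a, move_b
    return total / (2 * rounds)


print("TFT  with noise:", average_payoff(forgiveness=0.0))
print("GTFT with noise:", average_payoff(forgiveness=1 / 3))
```

With plain TFT, a single mistaken defection is echoed back and forth until another mistake happens to break the cycle, whereas an occasional act of forgiveness lets the pair return to mutual cooperation sooner. The exact averages depend on the assumed noise level, forgiveness probability, and point values.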

Even when partners might not meet again, it could be strategically wise to cooperate. When people can selectively choose whom to interact with, it might pay to be seen as a cooperator. Research shows that cooperators create better opportunities for themselves than non-cooperators: they are selectively preferred as collaborative partners, romantic partners, and group leaders. This only occurs, however, when people's social dilemma choices are monitored by others. Public acts of altruism and cooperation like charity giving, philanthropy, and bystander intervention are probably manifestations of reputation-based cooperation.

Structural solutions

Structural solutions change the rules of the game either through modifying the social dilemma or removing the dilemma altogether. Field research on conservation behaviour has shown that selective incentives in the form of monetary rewards are effective in decreasing domestic water and electricity use.[citation needed] Furthermore, numerous experimental and case studies show that cooperation is more likely when individuals can monitor the situation, can punish or "sanction" defectors, are legitimized by external political structures to cooperate and self-organize, can communicate with one another and share information, know one another, have effective arenas for conflict resolution, and are managing social and ecological systems that have well-defined boundaries or are easily monitored.[38][39] Yet implementation of reward and punishment systems can be problematic for various reasons. First, there are significant costs associated with creating and administering sanction systems. Providing selective rewards and punishments requires support institutions to monitor the activities of both cooperators and non-cooperators, which can be quite expensive to maintain. Second, these systems are themselves public goods because one can enjoy the benefits of a sanctioning system without contributing to its existence. The police, army, and judicial system will fail to operate unless people are willing to pay taxes to support them. This raises the question of whether many people want to contribute to these institutions. Experimental research suggests that low-trust individuals in particular are willing to invest money in punishment systems.[40] A considerable portion of people are quite willing to punish non-cooperators even if they personally do not profit. Some researchers even suggest that altruistic punishment is an evolved mechanism for human cooperation.

A third limitation is that punishment and reward systems might undermine people's voluntary cooperative intention. Some people get a "warm glow" from cooperation, and the provision of selective incentives might crowd out their cooperative intention. Similarly, the presence of a negative sanctioning system might undermine voluntary cooperation. Some research has found that punishment systems decrease the trust that people have in others.[41] Other research has found that graduated sanctions, where initial punishments have low severity, make allowances for unusual hardships, and allow the violator to reenter the trust of the collective, support collective resource management and increase trust in the system.[42][43]

Boundary structural solutions modify the social dilemma structure, and such strategies are often very effective. Experimental studies on commons dilemmas show that overharvesting groups are more willing to appoint a leader to look after the common resource. There is a preference for a democratically elected prototypical leader with limited power, especially when people's group ties are strong.[44] When ties are weak, groups prefer a stronger leader with a coercive power base. The question remains whether authorities can be trusted in governing social dilemmas, and field research shows that legitimacy and fair procedures are extremely important in citizens' willingness to accept authorities. Other research emphasizes a greater motivation for groups to successfully self-organize, without the need for an external authority base, when they place a high value on the resources in question but, again, before the resources are severely overharvested. An external "authority" is not presumed to be the solution in these cases; rather, effective self-organization, collective governance, and care for the resource base are.[45]

Another structural solution is reducing group size. Cooperation generally declines when group size increases. In larger groups people often feel less responsible for the common good and believe, rightly or wrongly, that their contribution does not matter. Reducing the scale, for example by dividing a large-scale dilemma into smaller, more manageable parts, might be an effective tool in raising cooperation. Additional research on governance shows that group size has a curvilinear effect, since very small governance groups may lack the person-power to effectively research, manage, and administer the resource system or the governance process.[45]

Another proposed boundary solution is to remove the social from the dilemma, by means of privatization. This restructuring of incentives would remove the temptation to place individual needs above group needs. However, it is not easy to privatize moveable resources such as fish, water, and clean air. Privatization also raises concerns about social justice as not everyone may be able to get an equal share. Privatization might also erode people's intrinsic motivation to cooperate, by externalizing the locus of control.

In society, social units that face an internal social dilemma are typically embedded in interactions with other groups, often in competition for resources of different kinds. Once this intergroup competition is modeled, the social dilemma is strongly attenuated.[46]
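One way to see how intergroup competition can weaken the incentive to free-ride is a toy model in which two groups compete for a prize that is divided in proportion to each group's total contributions. This is a hypothetical sketch, not the model used in the cited research; the prize rule, the parameter values, and the function names are all assumptions made for illustration.

```python
def member_payoff(own, own_group_others, rival_total,
                  n=4, r=1.6, prize=0.0):
    """Payoff to one member when their group also competes for a prize.

    own              -- this member's contribution (0 or 1)
    own_group_others -- total contributed by the rest of their group
    rival_total      -- total contributed by the rival group
    n, r             -- group size and public-good multiplier (assumed)
    prize            -- contest prize, split by contribution share (assumed)
    """
    own_total = own + own_group_others
    public_share = r * own_total / n
    all_total = own_total + rival_total
    # If nobody contributes anywhere, the prize is split evenly.
    group_share = own_total / all_total if all_total > 0 else 0.5
    return 1.0 - own + public_share + prize * group_share / n


def gain_from_contributing(own_group_others, rival_total, **kwargs):
    """Payoff difference between contributing 1 and contributing 0."""
    return (member_payoff(1, own_group_others, rival_total, **kwargs)
            - member_payoff(0, own_group_others, rival_total, **kwargs))


if __name__ == "__main__":
    for prize in (0.0, 8.0, 16.0):
        gain = gain_from_contributing(1, 2, prize=prize)
        print(f"prize {prize:>4}: gain from contributing = {gain:+.3f}")
```

Without a prize, contributing costs the member more than it returns. As the prize grows, the loss from contributing shrinks and, under these assumed numbers, eventually turns into a gain, which is one simple sense in which the dilemma is attenuated.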

There are many additional structural solutions which modify the social dilemma, both from the inside and from the outside. The likelihood of successfully co-managing a shared resource, successfully organizing to self-govern, or successfully cooperating in a social dilemma depends on many variables, from the nature of the resource system, to the nature of the social system the actors are a part of, to the political position of external authorities, to the ability to communicate effectively, to the rules-in-place regarding the management of the commons.[47] However, sub-optimal or failed results in a social dilemma (and perhaps the need for privatization or an external authority) tend to occur "when resource users do not know who all is involved, do not have a foundation of trust and reciprocity, cannot communicate, have no established rules, and lack effective monitoring and sanctioning mechanisms."[48]

Conclusions

Close examination reveals that social dilemmas underlie many of the most pressing global issues, from climate change to conflict escalation. Their widespread importance warrants widespread understanding of the main types of dilemmas and accompanying paradigms. Fortunately, the literature on the subject is expanding to accommodate the pressing need to understand social dilemmas as the basis for real-world problems.

Research in this area is applied to areas such as organizational welfare, public health, and local and global environmental change. The emphasis is shifting from pure laboratory research towards research testing combinations of motivational, strategic, and structural solutions. It is encouraging that researchers from various behavioral sciences are developing unifying theoretical frameworks to study social dilemmas (such as evolutionary theory, or the Social-Ecological Systems framework developed by Elinor Ostrom and her colleagues). For instance, there is a burgeoning neuroeconomics literature studying brain correlates of decision-making in social dilemmas with neuroscience methods. The study of social dilemmas does not fit into the conventional distinctions between fields, and it demands a multidisciplinary approach that transcends divisions between economics, political science, and psychology.

See also

- Collective action

- Coordination game

- Decision theory

- Elinor Ostrom

- Game theory

- Identity politics

- Moral economy

- Nash equilibrium

- Non-zero-sum

- Prisoner's dilemma

- Rationality

- Social trap

- Strategic games

- Superimposed Schedules of Reinforcement

- Tragedy of the anticommons

- Tragedy of the commons

- Voting paradox

- Wicked problem

- Zero-sum game

References

- ^ Brown, Garrett; McLean, Iain; McMillan, Alistair, eds. (2018-01-18). "Collective action problem". Collective action problem - Oxford Reference. 1. Oxford University Press. doi:10.1093/acref/9780199670840.001.0001. ISBN 9780199670840. Retrieved 2018-04-11.

- ^ Erhard Friedberg, "Conflict of Interest from the Perspective of the Sociology of Organized Action" in Conflict of Interest in Global, Public and Corporate Governance, Anne Peters & Lukas Handschin (eds), Cambridge University Press, 2012

- ^ Allison, S. T.; Beggan, J. K.; Midgley, E. H. (1996). "The quest for "similar instances" and "simultaneous possibilities": Metaphors in social dilemma research". Journal of Personality and Social Psychology. 71 (3): 479–497. doi:10.1037/0022-3514.71.3.479.

- ^ Hobbes, Thomas. Leviathan.

- ^ Hume, David. A Treatise of Human Nature.

- ^ Sandler, Todd (2015-09-01). "Collective action: fifty years later". Public Choice. 164 (3–4): 195–216. doi:10.1007/s11127-015-0252-0. ISSN 0048-5829.

- ^ Rapoport, A. (1962). The use and misuse of game theory. Scientific American, 207(6), 108–119. http://www.jstor.org/stable/24936389

- ^ "What is Game Theory?". levine.sscnet.ucla.edu. Archived from the original on 2018-04-16. Retrieved 2018-04-18.

- ^ "Game theory II: Prisoner's dilemma | Policonomics". policonomics.com. Retrieved 2018-04-18.

- ^ "The Collective Action Problem | GEOG 30N: Geographic Perspectives on Sustainability and Human-Environment Systems, 2011". www.e-education.psu.edu. Retrieved 2018-04-18.

- ^ Rapoport, A., & Chammah, A. M. (1965). Prisoner’s Dilemma: A study of conflict and cooperation. Ann Arbor, MI: University of Michigan Press.

- ^ Van Vugt, M., & Van Lange, P. A. M. (2006). Psychological adaptations for prosocial behavior: The altruism puzzle. In M. Schaller, J. A. Simpson, & D. T. Kenrick (Eds.), Evolution and Social Psychology (pp. 237–261). New York: Psychology Press.

- ^ Robinson, D.R.; Goforth, D.J. (May 5, 2004). "Alibi games: the Asymmetric Prisoner's Dilemmas" (PDF). Meetings of the Canadian Economics Association, Toronto, June 4–6, 2004.

- ^ Beckenkamp, Martin; Hennig-Schmidt, Heike; Maier-Rigaud, Frank P. (March 4, 2007). "Cooperation in Symmetric and Asymmetric Prisoner's Dilemma Games" (preprint link). Max Planck Institute for Research on Collective Goods.

- ^ Weber, M.; Kopelman, S.; Messick, D. (2004). "A conceptual Review of Decision Making in Social Dilemmas: Applying the Logic of Appropriateness". Personality and Social Psychology Review. 8 (3): 281–307. doi:10.1207/s15327957pspr0803_4. PMID 15454350. S2CID 1525372.

- ^ Kopelman, S (2009). "The effect of culture and power on cooperation in commons dilemmas: Implications for global resource management". Organizational Behavior and Human Decision Processes. 108: 153–163. doi:10.1016/j.obhdp.2008.06.004. hdl:2027.42/50454.

- ^ Allison, S. T.; Kerr, N.L. (1994). "Group correspondence biases and the provision of public goods". Journal of Personality and Social Psychology. 66 (4): 688–698. doi:10.1037/0022-3514.66.4.688.

- ^ Baumol, William (1952). Welfare Economics and the Theory of the State. Cambridge, MA: Harvard University Press.

- ^ Karau, Steven J.; Williams, Kipling D. (1993). "Social loafing: A meta-analytic review and theoretical integration". Journal of Personality and Social Psychology. 65 (4): 681–706. doi:10.1037/0022-3514.65.4.681. The reduction in motivation and effort when individuals work collectively compared with when they work individually or coactively

- ^ "Public Goods: The Concise Encyclopedia of Economics | Library of Economics and Liberty". www.econlib.org. Retrieved 2018-04-18.

- ^ Banerjee, Abhijit (September 2006). "Public Action for Public Goods".

- ^ Schroeder, D. A. (1995). An introduction to social dilemmas. In D.A. Schroeder (Ed.), Social dilemmas: Perspectives on individuals and groups (pp. 1–14).

- ^ Kenneth T. Frank; Brian Petrie; Jae S. Choi; William C. Leggett (2005). "Trophic Cascades in a Formerly Cod-Dominated Ecosystem". Science. 308 (5728): 1621–1623. Bibcode:2005Sci...308.1621F. doi:10.1126/science.1113075. PMID 15947186. S2CID 45088691.

- ^ Brechner, K. C. (1977). "An experimental analysis of social traps". Journal of Experimental Social Psychology. 13 (6): 552–564. doi:10.1016/0022-1031(77)90054-3.

- ^ W F Lloyd - Two Lectures on the Checks to Population (1833)

- ^ Maaravi, Yossi; Levy, Aharon; Gur, Tamar; Confino, Dan; Segal, Sandra (2021-02-11). ""The Tragedy of the Commons": How Individualism and Collectivism Affected the Spread of the COVID-19 Pandemic". Frontiers in Public Health. 9: 627559. doi:10.3389/fpubh.2021.627559. ISSN 2296-2565. PMC 7905028. PMID 33643992.

- ^ Platt, J (1973). "Social traps". American Psychologist. 28 (8): 641–651. doi:10.1037/h0035723.

- ^ Wallace, M.D. (1979). Arms races and escalations: some new evidence. In J.D. Singer (Ed.), Explaining war: Selected papers from the correlates of war project (pp. 24-252). Beverly Hills, CA: Sage.

- ^ Tetlock, P. E. (1983). "Policy-makers' images of international conflict". Journal of Social Issues. 39: 67–86. doi:10.1111/j.1540-4560.1983.tb00130.x.

- ^ "Voting matters even if your vote doesn't: A collective action dilemma". Princeton University Press Blog. 2012-11-05. Retrieved 2018-04-18.

- ^ Kanazawa, Satoshi (2000). "A New Solution to the Collective Action Problem: The Paradox of Voter Turnout". American Sociological Review. 65 (3): 433–442. doi:10.2307/2657465. JSTOR 2657465.

- ^ Duit, Andreas (2011-12-01). "Patterns of Environmental Collective Action: Some Cross-National Findings". Political Studies. 59 (4): 900–920. doi:10.1111/j.1467-9248.2010.00858.x. ISSN 1467-9248. S2CID 142706143.

- ^ "A Majority Says that Government Regulation of Business Does More Harm than Good". Pew Research Center. 2012-03-07. Retrieved 2018-04-18.

- ^ Van Vugt, M.; Meertens, R. & Van Lange, P. (1995). "Car versus public transportation? The role of social value orientations in a real-life social dilemma" (PDF). Journal of Applied Social Psychology. 25 (3): 358–378. CiteSeerX 10.1.1.612.8158. doi:10.1111/j.1559-1816.1995.tb01594.x. Archived from the original (PDF) on 2011-07-15.

- ^ Orbell, John M.; Dawes, Robyn M. & van de Kragt, Alphons J. C. (1988). "Explaining discussion-induced cooperation". Journal of Personality and Social Psychology. 54 (5): 811–819. doi:10.1037/0022-3514.54.5.811.

- ^ Razmerita, Liana; Kirchner, Kathrin; Nielsen, Pia (2016). "What factors influence knowledge sharing in organizations? A social dilemma perspective of social media communication" (PDF). Journal of Knowledge Management. 20 (6): 1225–1246. doi:10.1108/JKM-03-2016-0112. hdl:10398/e7995d52-ccc3-4156-b131-41e5908d0e63.

- ^ Nowak, M. A.; Sigmund, K. (1992). "Tit for tat in heterogeneous populations" (PDF). Nature. 355 (6357): 250–253. Bibcode:1992Natur.355..250N. doi:10.1038/355250a0. S2CID 4281385. Archived from the original (PDF) on 2011-06-16.

- ^ Ostrom, Elinor (1990). Governing the Commons: The Evolution of Institutions for Collective Action. Cambridge University Press.

- ^ Poteete, Janssen, and Ostrom (2010). Working Together: Collective Action, the Commons, and Multiple Methods in Practice. Princeton University Press.

- ^ Yamagishi, T. (1986). "The Provision of a Sanctioning System as a Public Good". Journal of Personality and Social Psychology. 51 (1): 110–116. doi:10.1037/0022-3514.51.1.110.

- ^ Mulder, L.B.; Van Dijk, E.; De Cremer, D.; Wilke, H.A.M. (2006). "Undermining trust and cooperation: The paradox of sanctioning systems in social dilemmas". Journal of Experimental Social Psychology. 42 (2): 147–162. doi:10.1016/j.jesp.2005.03.002.

- ^ Ostrom, Elinor (1990). Governing the Commons.

- ^ Poteete; et al. (2010). Working Together.

- ^ Van Vugt, M. & De Cremer, D. (1999). "Leadership in social dilemmas: The effects of group identification on collective actions to provide public goods" (PDF). Journal of Personality and Social Psychology. 76 (4): 587–599. doi:10.1037/0022-3514.76.4.587.

- ^ Ostrom, Elinor (24 July 2009). "A General Framework for Analyzing Sustainability of Social-Ecological Systems". Science. 325 (5939): 419–422. Bibcode:2009Sci...325..419O. doi:10.1126/science.1172133. PMID 19628857. S2CID 39710673.

- ^ See, for example, Gunnthorsdottir, A. and Rapoport, A. (2006). "Embedding social dilemmas in intergroup competition reduces free-riding". Organizational Behavior and Human Decision Processes. 101 (2): 184–199. doi:10.1016/j.obhdp.2005.08.005. Also contains a survey of the relevant literature.

- ^ Ostrom, Elinor (25 September 2007). "A diagnostic approach for going beyond panaceas". Proceedings of the National Academy of Sciences. 104 (39): 15181–15187. doi:10.1073/pnas.0702288104. PMC 2000497. PMID 17881578.

- ^ Poteete, Janssen, and Ostrom (2010). Working Together: Collective Action, the Commons, and Multiple Methods in Practice. Princeton University Press. p. 228.

Further reading

- Axelrod, R. A. (1984). The evolution of cooperation. New York: Basic Books. ISBN 978-0-465-02122-2.

- Batson, D. & Ahmad, N. (2008). "Altruism: Myth or Reality?". In-Mind Magazine. 6. Archived from the original on 2008-05-17.

- Dawes, R. M. (1980). "Social dilemmas". Annual Review of Psychology. 31: 169–193. doi:10.1146/annurev.ps.31.020180.001125.

- ——— & Messick, M. (2000). "Social Dilemmas". International Journal of Psychology. 35 (2): 111–116. doi:10.1080/002075900399402.

- Kollock, P. (1998). "Social dilemmas: Anatomy of cooperation". Annual Review of Sociology. 24: 183–214. doi:10.1146/annurev.soc.24.1.183. JSTOR 223479.

- Komorita, S. & Parks, C. (1994). Social Dilemmas. Boulder, CO: Westview Press. ISBN 978-0-8133-3003-7.

- Kopelman, S., Weber, M, & Messick, D. (2002). Factors Influencing Cooperation in Commons Dilemmas: A Review of Experimental Psychological Research. In E. Ostrom et al., (Eds.) The Drama of the Commons. Washington DC: National Academy Press. Ch. 4., 113–156

- Kopelman, S. (2009). "The effect of culture and power on cooperation in commons dilemmas: Implications for global resource management" (PDF). Organizational Behavior and Human Decision Processes. 108: 153–163. doi:10.1016/j.obhdp.2008.06.004. hdl:2027.42/50454.

- Messick, D. M. & Brewer, M. B. (1983). "Solving social dilemmas: A review". In Wheeler, L. & Shaver, P. (eds.). Review of personality and social psychology. 4. Beverly Hills, CA: Sage. pp. 11–44.

- Nowak, M. A.; Sigmund, K. (1992). "Tit for tat in heterogeneous populations" (PDF). Nature. 355 (6357): 250–253. Bibcode:1992Natur.355..250N. doi:10.1038/355250a0. S2CID 4281385. Archived from the original (PDF) on 2011-06-16.

- Palfrey, Thomas R. & Rosenthal, Howard (1988). "Private Incentives in Social Dilemmas: The Effects of Incomplete Information and Altruism". Journal of Public Economics. 35 (3): 309–332. doi:10.1016/0047-2727(88)90035-7.

- Ridley, M. (1997). Origins of virtue. London: Penguin Classics. ISBN 978-0-670-87449-1.

- Rothstein, B. (2003). Social Traps and the Problem of Trust. Cambridge: Cambridge University Press. ISBN 978-0521612821.

- Schneider, S. K. & Northcraft, G. B. (1999). "Three social dilemmas of workforce diversity in organizations: A social identity perspective". Human Relations. 52 (11): 1445–1468. doi:10.1177/001872679905201105. S2CID 145217407.

- Van Lange, P. A. M.; Otten, W.; De Bruin, E. M. N. & Joireman, J. A. (1997). "Development of prosocial, individualistic, and competitive orientations: Theory and preliminary evidence" (PDF). Journal of Personality and Social Psychology. 73 (4): 733–746. doi:10.1037/0022-3514.73.4.733. hdl:1871/17714. PMID 9325591.

- Van Vugt, M. & De Cremer, D. (1999). "Leadership in social dilemmas: The effects of group identification on collective actions to provide public goods" (PDF). Journal of Personality and Social Psychology. 76 (4): 587–599. doi:10.1037/0022-3514.76.4.587.

- Weber, M.; Kopelman, S. & Messick, D. M. (2004). "A conceptual review of social dilemmas: Applying a logic of appropriateness". Personality and Social Psychology Review. 8 (3): 281–307. doi:10.1207/s15327957pspr0803_4. PMID 15454350. S2CID 1525372.

- Yamagishi, T. (1986). "The structural goal/expectation theory of cooperation in social dilemmas". In Lawler, E. (ed.). Advances in group processes. 3. Greenwich, CT: JAI Press. pp. 51–87. ISBN 978-0-89232-572-6.
