Count sketch

| Part of a series on |

| Machine learning and data mining |

|---|

|

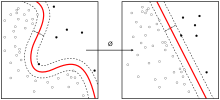

Count sketch is a type of dimensionality reduction that is particularly efficient in statistics, machine learning and algorithms.[1][2] It was invented by Moses Charikar, Kevin Chen and Martin Farach-Colton[3] in an effort to speed up the AMS sketch by Alon, Matias and Szegedy for approximating the frequency moments of streams.[4]

The sketch is nearly identical to the feature hashing algorithm by John Moody,[5] but differs in its use of hash functions with low dependence, which makes it more practical. To still have a high probability of success, the median trick is used to aggregate multiple count sketches, rather than the mean.

These properties make the count sketch useful for explicit kernel methods and for bilinear pooling in neural networks, and it is a cornerstone of many numerical linear algebra algorithms.[6]

Mathematical definition

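1. For constants $w$ and $t$ (to be defined later) independently choose $d = 2t + 1$ random hash functions $h_1, \dots, h_d$ and $s_1, \dots, s_d$ such that $h_i : [n] \to [w]$ and $s_i : [n] \to \{\pm 1\}$. It is necessary that the hash families from which $h_i$ and $s_i$ are chosen be pairwise independent.

2. For each item $q_k$ in the stream and each $i \in \{1, \dots, d\}$, add $s_i(q_k)$ to the $h_i(q_k)$th bucket of the $i$th hash.

At the end of this process, one has $wd$ sums $(C_{i,j})$ where

$$C_{i,j} = \sum_{k \,:\, h_i(q_k) = j} s_i(q_k).$$

To estimate the count of an item $q$, one computes the following value:

$$r_q = \operatorname{median}_{i = 1, \dots, d} \; s_i(q) \cdot C_{i, h_i(q)}.$$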
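The update and estimation steps can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the class name `CountSketch`, the modulus `P`, and the affine hash family `((a*q + b) mod P) mod w` are assumptions chosen for the example (the final reduction modulo `w` makes the family only approximately pairwise independent), and items are assumed to be non-negative integers. The sketch keeps $d = 2t + 1$ rows of $w$ buckets, one bucket hash and one sign hash per row, and answers a query with the median over rows.

```python
import random
from statistics import median

# Mersenne prime used as the modulus of the illustrative hash families (an assumption).
P = (1 << 61) - 1


class CountSketch:
    """Illustrative count sketch with d = 2t + 1 rows of w buckets each."""

    def __init__(self, w, t, seed=0):
        rng = random.Random(seed)
        self.w = w
        self.d = 2 * t + 1
        # One (a, b) pair per row for the bucket hash h_i and for the sign hash s_i.
        self.h_params = [(rng.randrange(1, P), rng.randrange(P)) for _ in range(self.d)]
        self.s_params = [(rng.randrange(1, P), rng.randrange(P)) for _ in range(self.d)]
        self.C = [[0] * w for _ in range(self.d)]

    def _h(self, i, q):
        """Bucket hash of row i: maps an integer item to a bucket in 0..w-1."""
        a, b = self.h_params[i]
        return ((a * q + b) % P) % self.w

    def _s(self, i, q):
        """Sign hash of row i: maps an integer item to +1 or -1."""
        a, b = self.s_params[i]
        return 1 if ((a * q + b) % P) & 1 else -1

    def add(self, q, count=1):
        """Process `count` occurrences of item q: add the sign to one bucket per row."""
        for i in range(self.d):
            self.C[i][self._h(i, q)] += self._s(i, q) * count

    def estimate(self, q):
        """Median over rows of the signed bucket that q hashes to."""
        return median(self._s(i, q) * self.C[i][self._h(i, q)] for i in range(self.d))


# Hypothetical stream: item 7 is frequent, the remaining items act as noise.
sketch = CountSketch(w=64, t=2, seed=1)
for q in [7] * 50 + [3] * 5 + list(range(100, 120)):
    sketch.add(q)
print(sketch.estimate(7))
```

Each row on its own gives an estimate whose error comes from other items colliding in the same bucket with random signs. Taking the median over an odd number of rows keeps the estimate robust when a minority of rows suffer a bad collision.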

Relation to Tensor sketch

The count sketch projection of the outer product of two vectors is equivalent to the convolution of two component count sketches.

The count sketch computes a vector convolution

$$C^{(1)}x \ast C^{(2)}x^T,$$

where $C^{(1)}$ and $C^{(2)}$ are independent count sketch matrices.

Pham and Pagh[7] show that this equals $C(x \otimes x^T)$, a count sketch $C$ of the outer product of vectors, where $\otimes$ denotes the Kronecker product.
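The identity can be checked numerically on a small example. The following Python sketch makes several assumptions: the hash functions are stored as fully random lookup tables (stronger than pairwise independence, chosen for brevity), the convolution is taken to be circular (indices wrap modulo $w$), and the sketch of the outer product uses the combined bucket hash $(h_1(i) + h_2(j)) \bmod w$ with sign $s_1(i)\,s_2(j)$. All function names are hypothetical. The convolution is computed directly in $O(w^2)$ time to keep the example dependency-free.

```python
import random


def random_hashes(n, w, rng):
    """Draw a bucket hash h: [n] -> [w] and a sign hash s: [n] -> {+1, -1} as lookup tables."""
    h = [rng.randrange(w) for _ in range(n)]
    s = [rng.choice((-1, 1)) for _ in range(n)]
    return h, s


def count_sketch(x, h, s, w):
    """Single-row count sketch of the vector x."""
    out = [0] * w
    for i, xi in enumerate(x):
        out[h[i]] += s[i] * xi
    return out


def circular_convolution(a, b):
    """Direct circular convolution of two equal-length vectors."""
    w = len(a)
    out = [0] * w
    for i, ai in enumerate(a):
        for j, bj in enumerate(b):
            out[(i + j) % w] += ai * bj
    return out


def sketch_outer_product(x, y, h1, s1, h2, s2, w):
    """Count sketch of the outer product of x and y, using the combined
    bucket hash (h1[i] + h2[j]) mod w and the combined sign s1[i] * s2[j]."""
    out = [0] * w
    for i, xi in enumerate(x):
        for j, yj in enumerate(y):
            out[(h1[i] + h2[j]) % w] += s1[i] * s2[j] * xi * yj
    return out


rng = random.Random(0)
n, w = 8, 5
x = [rng.randint(-3, 3) for _ in range(n)]
h1, s1 = random_hashes(n, w, rng)
h2, s2 = random_hashes(n, w, rng)

# Route 1: sketch x twice with independent hashes, then convolve the two sketches.
via_convolution = circular_convolution(count_sketch(x, h1, s1, w), count_sketch(x, h2, s2, w))
# Route 2: sketch the n*n outer product directly with the combined hash.
direct = sketch_outer_product(x, x, h1, s1, h2, s2, w)
print(via_convolution == direct)
```

The first route never materialises the $n^2$ entries of the outer product, which is the point of the construction.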

The fast Fourier transform can be used to do fast convolution of count sketches. By using the face-splitting product[8][9][10] such structures can be computed much faster than normal matrices.

See also

- Count–min sketch

- Tensor sketch

References

1. Faisal M. Algashaam; Kien Nguyen; Mohamed Alkanhal; Vinod Chandran; Wageeh Boles. "Multispectral Periocular Classification With Multimodal Compact Multi-Linear Pooling". IEEE Access, Vol. 5. 2017.

2. Ahle, Thomas; Knudsen, Jakob (2019-09-03). "Almost Optimal Tensor Sketch". ResearchGate. Retrieved 2020-07-11.

3. Charikar, Moses, Kevin Chen, and Martin Farach-Colton. "Finding frequent items in data streams." International Colloquium on Automata, Languages, and Programming. Springer, Berlin, Heidelberg, 2002.

4. Alon, Noga, Yossi Matias, and Mario Szegedy. "The space complexity of approximating the frequency moments." Journal of Computer and System Sciences 58.1 (1999): 137–147.

5. Moody, John. "Fast learning in multi-resolution hierarchies." Advances in Neural Information Processing Systems. 1989.

6. Woodruff, David P. "Sketching as a Tool for Numerical Linear Algebra." Foundations and Trends in Theoretical Computer Science 10.1-2 (2014): 1–157.

7. Pham, Ninh; Pagh, Rasmus (2013). Fast and scalable polynomial kernels via explicit feature maps. SIGKDD international conference on Knowledge discovery and data mining. Association for Computing Machinery. doi:10.1145/2487575.2487591.

8. Slyusar, V. I. (1998). "End products in matrices in radar applications" (PDF). Radioelectronics and Communications Systems. 41 (3): 50–53.

9. Slyusar, V. I. (1997-05-20). "Analytical model of the digital antenna array on a basis of face-splitting matrix products" (PDF). Proc. ICATT-97, Kyiv: 108–109.

10. Slyusar, V. I. (March 13, 1998). "A Family of Face Products of Matrices and its Properties" (PDF). Cybernetics and Systems Analysis C/C of Kibernetika I Sistemnyi Analiz.- 1999. 35 (3): 379–384. doi:10.1007/BF02733426.

