Word embedding

| Part of a series on |

| Machine learning and data mining |

|---|

|

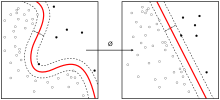

In natural language processing (NLP), a word embedding is a representation of a word for text analysis. It is typically a real-valued vector that encodes the word's meaning so that words closer together in the vector space are expected to be similar in meaning.[1] Word embeddings can be obtained using language modeling and feature learning techniques, in which words or phrases from the vocabulary are mapped to vectors of real numbers. Conceptually, this involves a mathematical embedding from a space with one dimension per word to a continuous vector space with a much lower dimension.
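"Closer in the vector space" can be made concrete with a closeness measure. The sketch below uses cosine similarity, which is a common choice but an assumption here; Euclidean distance is also used. The four-dimensional vectors are invented for illustration and were not learned from any corpus. The dictionary and function names are hypothetical.

```python
import numpy as np

# Hypothetical 4-dimensional embeddings, hand-picked for illustration only;
# learned embeddings usually have tens to hundreds of dimensions.
embeddings = {
    "king":  np.array([0.8, 0.6, 0.1, 0.0]),
    "queen": np.array([0.7, 0.7, 0.1, 0.1]),
    "apple": np.array([0.0, 0.1, 0.9, 0.4]),
}

def cosine_similarity(u: np.ndarray, v: np.ndarray) -> float:
    """Cosine of the angle between u and v; 1.0 means the same direction."""
    return float(np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v)))

def most_similar(word: str, vocab: dict) -> str:
    """Return the other word whose vector has the highest cosine similarity to `word`."""
    target = vocab[word]
    candidates = (w for w in vocab if w != word)
    return max(candidates, key=lambda w: cosine_similarity(target, vocab[w]))

print(most_similar("king", embeddings))  # → queen
```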

Methods to generate this mapping include neural networks,[2] dimensionality reduction on the word co-occurrence matrix,[3][4][5] probabilistic models,[6] an explainable knowledge-base method,[7] and explicit representation in terms of the context in which words appear.[8]
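The co-occurrence route can be illustrated with a minimal sketch in the style of latent semantic analysis. It counts how often words appear near each other, then keeps only the leading directions of a singular value decomposition. The toy corpus, the window of one word, and the target dimension of two are assumptions for illustration. Practical systems usually reweight or normalize the raw counts before factorizing them.

```python
import numpy as np

# Toy corpus (hypothetical); each inner list is one tokenized sentence.
corpus = [
    ["i", "like", "deep", "learning"],
    ["i", "like", "nlp"],
    ["i", "enjoy", "flying"],
]

def cooccurrence_matrix(sentences, window=1):
    """Count how often each pair of words occurs within `window` positions."""
    vocab = sorted({w for s in sentences for w in s})
    index = {w: i for i, w in enumerate(vocab)}
    counts = np.zeros((len(vocab), len(vocab)))
    for s in sentences:
        for i, w in enumerate(s):
            lo, hi = max(0, i - window), min(len(s), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    counts[index[w], index[s[j]]] += 1
    return vocab, counts

def svd_embeddings(counts, dim=2):
    """Truncated SVD: rows of U_k scaled by the top-k singular values are word vectors."""
    u, s, _ = np.linalg.svd(counts)
    return u[:, :dim] * s[:dim]

vocab, counts = cooccurrence_matrix(corpus)
vectors = svd_embeddings(counts, dim=2)
print(vectors.shape)  # → (7, 2)
```

Each of the seven vocabulary words ends up with a dense two-dimensional vector in place of its seven-dimensional row of counts.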

Word and phrase embeddings, when used as the underlying input representation, have been shown to boost performance in NLP tasks such as syntactic parsing[9] and sentiment analysis.[10]

Development and history of the approach

In linguistics, word embeddings were discussed in the research area of distributional semantics. This area aims to quantify and categorize semantic similarities between linguistic items based on their distributional properties in large samples of language data. The underlying idea that "a word is characterized by the company it keeps" was popularized by John Rupert Firth.[11]

The notion of a semantic space, in which lexical items (words or multi-word terms) are represented as vectors or embeddings, is motivated by the computational challenge of capturing distributional characteristics. Those characteristics can then be used in practice to measure similarity between words, phrases, or entire documents. The first generation of semantic space models is the vector space model for information retrieval.[12][13][14] In their simplest form, such vector space models for words and their distributional data result in a very sparse vector space of high dimensionality (cf. curse of dimensionality). Reducing the number of dimensions with linear algebraic methods such as singular value decomposition led to the introduction of latent semantic analysis in the late 1980s. It also led to the random indexing approach for collecting word co-occurrence contexts.[15][16][17][18][19] In 2000, Bengio et al. introduced, in a series of papers, "neural probabilistic language models". These models reduce the high dimensionality of word representations in contexts by "learning a distributed representation for words".[20][21] Word embeddings come in two styles. In one, words are expressed as vectors of co-occurring words; in the other, words are expressed as vectors of the linguistic contexts in which they occur. Lavelli et al. (2004) compared these styles.[22] Roweis and Saul published in Science how to use "locally linear embedding" (LLE) to discover representations of high-dimensional data structures.[23] Since about 2005, following foundational work by Yoshua Bengio and colleagues, most new word embedding techniques have relied on neural network architectures rather than on more probabilistic and algebraic models.[24][25]
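Random indexing avoids building the large sparse co-occurrence matrix. Each word receives a fixed-size, sparse random "index vector", and the index vectors of a word's neighbours are summed into that word's context vector. The following is a minimal sketch in the spirit of the random indexing work cited above. The dimension (100), the number of non-zero entries (4), the window (2), and the function names are illustrative choices, not values taken from those papers.

```python
import numpy as np

def index_vector(rng, dim, nonzeros):
    """Sparse ternary random vector: `nonzeros` entries are +1 or -1, the rest are 0."""
    v = np.zeros(dim)
    positions = rng.choice(dim, size=nonzeros, replace=False)
    v[positions] = rng.choice([-1.0, 1.0], size=nonzeros)
    return v

def random_indexing(sentences, dim=100, nonzeros=4, window=2, seed=0):
    """Sum the index vectors of each word's neighbours into its context vector."""
    rng = np.random.default_rng(seed)
    vocab = sorted({w for s in sentences for w in s})
    index = {w: index_vector(rng, dim, nonzeros) for w in vocab}
    context = {w: np.zeros(dim) for w in vocab}
    for s in sentences:
        for i, w in enumerate(s):
            for j in range(max(0, i - window), min(len(s), i + window + 1)):
                if j != i:
                    context[w] += index[s[j]]
    return context

corpus = [["the", "cat", "sat"], ["the", "dog", "sat"], ["stocks", "fell", "sharply"]]
vectors = random_indexing(corpus)
# "cat" and "dog" have exactly the same neighbours here, so their vectors coincide.
print(np.allclose(vectors["cat"], vectors["dog"]))  # → True
```

The vector length stays fixed at `dim` however large the vocabulary grows, which is the point of the method.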

Many research groups adopted the approach after two developments around 2010. Theoretical work improved the quality of the vectors and the training speed of the models, and hardware advances allowed a broader parameter space to be explored profitably. In 2013, a team at Google led by Tomas Mikolov created word2vec, a word embedding toolkit that can train vector space models faster than previous approaches. The word2vec approach has been widely used in experimentation and was instrumental in raising interest in word embeddings as a technology. It moved the research strand out of specialised research into broader experimentation and eventually paved the way for practical application.[26]
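A widely used word2vec training objective is skip-gram with negative sampling (SGNS), described in the Mikolov et al. (2013) paper cited above. The sketch below is a toy NumPy version, not the word2vec toolkit. It draws negatives uniformly, whereas the original samples them from a smoothed unigram distribution. It also skips subsampling of frequent words and uses illustrative hyperparameters. The word ids and function names are hypothetical.

```python
import numpy as np

def skipgram_pairs(sentence, window=2):
    """Return (center, context) pairs for every word within `window` positions."""
    pairs = []
    for i, center in enumerate(sentence):
        for j in range(max(0, i - window), min(len(sentence), i + window + 1)):
            if j != i:
                pairs.append((center, sentence[j]))
    return pairs

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def train_sgns(sentences, vocab_size, dim=10, window=2, negatives=3,
               lr=0.05, epochs=50, seed=0):
    """Toy skip-gram with negative sampling; sentences are lists of word ids."""
    rng = np.random.default_rng(seed)
    w_in = rng.normal(scale=0.1, size=(vocab_size, dim))  # center-word vectors
    w_out = np.zeros((vocab_size, dim))                    # context-word vectors
    pairs = [p for s in sentences for p in skipgram_pairs(s, window)]
    for _ in range(epochs):
        for center, context in pairs:
            # One observed context word (label 1) plus uniformly drawn negatives (label 0).
            noise = rng.integers(vocab_size, size=negatives)
            targets = np.concatenate(([context], noise))
            labels = np.zeros(len(targets))
            labels[0] = 1.0
            v = w_in[center].copy()
            u = w_out[targets]                  # fancy indexing returns a copy
            grad = sigmoid(u @ v) - labels      # d(logistic loss)/d(score) per target
            w_in[center] -= lr * (grad @ u)
            # subtract.at accumulates correctly even if a target id repeats.
            np.subtract.at(w_out, targets, lr * np.outer(grad, v))
    return w_in

corpus = [[0, 1, 2], [0, 3, 2], [4, 5, 6]]  # hypothetical word ids
vectors = train_sgns(corpus, vocab_size=7)
print(vectors.shape)  # → (7, 10)
```

Both gradients are computed from the pre-update vectors before either matrix is changed, which is why `v` is copied.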

Limitations

Traditionally, one of the main limitations of word embeddings (and of word vector space models in general) is that words with multiple meanings are conflated into a single representation (a single vector in the semantic space). In other words, polysemy and homonymy are not handled properly. For example, in the sentence "The club I tried yesterday was great!", it is not clear whether the term club refers to a club sandwich, a baseball club, a clubhouse, a golf club, or any other sense that club might have. The need to give each meaning of a word its own vector (multi-sense embeddings) has motivated several contributions in NLP that split single-sense embeddings into multi-sense ones.[27][28]

Most approaches that produce multi-sense embeddings fall into two main categories according to how they represent word senses: unsupervised and knowledge-based.[29] Multi-Sense Skip-Gram (MSSG),[30] which is based on word2vec skip-gram, performs word-sense discrimination and embedding simultaneously, which improves its training time. However, it assumes a specific number of senses for each word. In the Non-Parametric Multi-Sense Skip-Gram (NP-MSSG), this number can vary from word to word. Most Suitable Sense Annotation (MSSA)[31] combines the prior knowledge of lexical databases (e.g., WordNet, ConceptNet, BabelNet), word embeddings and word sense disambiguation. It labels word senses through an unsupervised, knowledge-based approach that considers a word's context in a pre-defined sliding window. Once the words are disambiguated, they can be fed to a standard word embedding technique, which produces multi-sense embeddings. The MSSA architecture allows the disambiguation and annotation process to be performed recurrently in a self-improving manner.
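The sense-discrimination step of MSSG and NP-MSSG can be sketched as follows, based on our reading of Neelakantan et al. (2014). The vectors of the surrounding context words are averaged, and the occurrence is assigned to the sense whose cluster centre is most similar by cosine. The non-parametric variant opens a new sense when no centre passes a similarity threshold. The threshold value, the toy vectors, and the function names are hypothetical. The sketch also omits the joint training of the sense vectors themselves.

```python
import numpy as np

def cosine(u, v):
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))

def assign_sense(context_vecs, centers, threshold=None):
    """Assign one occurrence of a word to a sense, updating `centers` in place.

    context_vecs: vectors of the surrounding context words.
    centers: list of per-sense cluster centres for this word.
    threshold=None keeps the number of senses fixed (MSSG-style; `centers`
    must then be non-empty). A float enables the non-parametric variant:
    a new sense is opened when no centre reaches that cosine similarity.
    """
    ctx = np.mean(context_vecs, axis=0)
    sims = [cosine(ctx, c) for c in centers]
    best = int(np.argmax(sims)) if sims else -1
    if threshold is not None and (best < 0 or sims[best] < threshold):
        centers.append(ctx.copy())
        return len(centers) - 1
    # Accumulate the context into the chosen centre (a running sum; cosine ignores scale).
    centers[best] = centers[best] + ctx
    return best

# Hypothetical global vectors for context words (3-d for readability).
glob = {
    "bread": np.array([1.0, 0.0, 0.0]), "ham": np.array([0.9, 0.1, 0.0]),
    "ball": np.array([0.0, 1.0, 0.1]), "swing": np.array([0.1, 0.9, 0.0]),
}
club_centers = []  # sense centres for "club", grown on demand
s1 = assign_sense([glob["bread"], glob["ham"]], club_centers, threshold=0.5)
s2 = assign_sense([glob["ball"], glob["swing"]], club_centers, threshold=0.5)
s3 = assign_sense([glob["ham"], glob["bread"]], club_centers, threshold=0.5)
print(s1, s2, s3)  # → 0 1 0
```

In this toy run, the sandwich-like and golf-like contexts of "club" end up in two separate senses, and a later sandwich-like context reuses the first one.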

Multi-sense embeddings have been reported to improve performance in several NLP tasks, such as part-of-speech tagging, semantic relation identification, semantic relatedness, named entity recognition and sentiment analysis.[32][33]

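More recently, contextual embeddings such as ELMo and BERT have been developed. Instead of assigning one fixed vector per word, these models compute a separate vector for each occurrence of a word from its surrounding context, which lets them disambiguate polysemous words. ELMo does this with bidirectional LSTM networks, and BERT with the Transformer architecture.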

For biological sequences: BioVectors

Asgari and Mofrad have proposed word embeddings for n-grams in biological sequences (e.g. DNA, RNA, and proteins) for bioinformatics applications.[34] The representation is called bio-vectors (BioVec) for biological sequences in general, protein-vectors (ProtVec) for proteins (amino-acid sequences), and gene-vectors (GeneVec) for gene sequences. It can be widely used in applications of deep learning in proteomics and genomics. The results presented by Asgari and Mofrad[34] suggest that BioVectors can characterize biological sequences in terms of biochemical and biophysical interpretations of the underlying patterns.
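The preprocessing step turns a sequence into "words". The sketch below assumes the scheme we understand ProtVec to use: n = 3, with the sequence split into three lists of non-overlapping 3-grams, one for each starting offset. Each list is then treated as a sentence for skip-gram training. The example peptide string is made up, and the function name is hypothetical.

```python
def shifted_ngrams(sequence: str, n: int = 3) -> list:
    """Split a sequence into n lists of non-overlapping n-grams, one per starting offset."""
    frames = []
    for shift in range(n):
        trimmed = sequence[shift:]
        # Step by n so the n-grams within one frame do not overlap; drop a short tail.
        frames.append([trimmed[i:i + n] for i in range(0, len(trimmed) - n + 1, n)])
    return frames

print(shifted_ngrams("MKVLAAGIV"))
# → [['MKV', 'LAA', 'GIV'], ['KVL', 'AAG'], ['VLA', 'AGI']]
```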

Thought vectors

Thought vectors are an extension of word embeddings to entire sentences or even documents. Some researchers hope that these can improve the quality of machine translation.[35]
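The simplest way to turn word embeddings into one fixed-size vector for a whole sentence is to average them. This is a common baseline and is swapped in here for illustration. It is not the skip-thought method cited above, which trains a recurrent encoder-decoder to predict neighbouring sentences. The embeddings and names below are hypothetical.

```python
import numpy as np

def average_embedding(tokens, embeddings):
    """Mean of the vectors of the tokens that have one; unknown tokens are skipped."""
    known = [embeddings[t] for t in tokens if t in embeddings]
    if not known:
        raise ValueError("none of the tokens has an embedding")
    return np.mean(known, axis=0)

# Hypothetical 2-d embeddings for illustration.
emb = {"cats": np.array([1.0, 0.0]), "purr": np.array([0.0, 1.0])}
print(average_embedding(["cats", "purr", "loudly"], emb))  # → [0.5 0.5]
```

Averaging ignores word order, which is one reason learned sentence encoders were proposed.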

Software

Software for training and using word embeddings includes Tomas Mikolov's word2vec, Stanford University's GloVe,[36] GN-GloVe,[37] Flair embeddings,[32] AllenNLP's ELMo,[38] BERT,[39] fastText, Gensim,[40] Indra[41] and Deeplearning4j. Principal component analysis (PCA) and t-distributed stochastic neighbour embedding (t-SNE) are both used to reduce the dimensionality of word vector spaces and to visualize word embeddings and clusters.[42]
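A PCA projection for plotting can be sketched with NumPy alone. The vectors are centred, decomposed with a singular value decomposition, and projected onto the first two principal directions. The random matrix below stands in for real embeddings, and the function name is hypothetical. t-SNE is nonlinear and is usually taken from a library such as scikit-learn (`sklearn.manifold.TSNE`) rather than written by hand.

```python
import numpy as np

def pca_2d(vectors):
    """Project the rows of `vectors` onto their first two principal components."""
    centered = vectors - vectors.mean(axis=0)
    # Rows of vt are the principal directions, sorted by decreasing singular value.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:2].T

# Random stand-in for a matrix of 10 word vectors with 50 dimensions each.
rng = np.random.default_rng(0)
embedding_matrix = rng.normal(size=(10, 50))
points = pca_2d(embedding_matrix)
print(points.shape)  # → (10, 2)
```

Each row of `points` can then be drawn as a labelled dot in a scatter plot.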

Examples of application

For instance, fastText is used to compute word embeddings for the text corpora in Sketch Engine, which are available online.[43]

See also

- Language modeling

- Artificial neural networks

- Natural language processing

- Computational linguistics

- Semantic relations

References

1. Jurafsky, Daniel; Martin, James H. (2000). Speech and Language Processing: An Introduction to Natural Language Processing, Computational Linguistics, and Speech Recognition. Upper Saddle River, N.J.: Prentice Hall. ISBN 978-0-13-095069-7.

2. Mikolov, Tomas; Sutskever, Ilya; Chen, Kai; Corrado, Greg; Dean, Jeffrey (2013). "Distributed Representations of Words and Phrases and their Compositionality". arXiv:1310.4546 [cs.CL].

3. Lebret, Rémi; Collobert, Ronan (2013). "Word Embeddings through Hellinger PCA". Conference of the European Chapter of the Association for Computational Linguistics (EACL 2014). arXiv:1312.5542.

4. Levy, Omer; Goldberg, Yoav (2014). Neural Word Embedding as Implicit Matrix Factorization (PDF). NIPS.

5. Li, Yitan; Xu, Linli (2015). Word Embedding Revisited: A New Representation Learning and Explicit Matrix Factorization Perspective (PDF). Int'l J. Conf. on Artificial Intelligence (IJCAI).

6. Globerson, Amir (2007). "Euclidean Embedding of Co-occurrence Data" (PDF). Journal of Machine Learning Research.

7. Qureshi, M. Atif; Greene, Derek (2018-06-04). "EVE: explainable vector based embedding technique using Wikipedia". Journal of Intelligent Information Systems. 53: 137–165. arXiv:1702.06891. doi:10.1007/s10844-018-0511-x. ISSN 0925-9902. S2CID 10656055.

8. Levy, Omer; Goldberg, Yoav (2014). Linguistic Regularities in Sparse and Explicit Word Representations (PDF). CoNLL. pp. 171–180.

9. Socher, Richard; Bauer, John; Manning, Christopher; Ng, Andrew (2013). Parsing with compositional vector grammars (PDF). Proc. ACL Conf.

10. Socher, Richard; Perelygin, Alex; Wu, Jean; Chuang, Jason; Manning, Chris; Ng, Andrew; Potts, Chris (2013). Recursive Deep Models for Semantic Compositionality Over a Sentiment Treebank (PDF). EMNLP.

11. Firth, J.R. (1957). "A synopsis of linguistic theory 1930–1955". Studies in Linguistic Analysis: 1–32. Reprinted in F.R. Palmer, ed. (1968). Selected Papers of J.R. Firth 1952–1959. London: Longman.

12. Salton, Gerard (1962). "Some experiments in the generation of word and document associations". Proceedings of the December 4–6, 1962, Fall Joint Computer Conference (AFIPS '62 Fall). pp. 234–250. doi:10.1145/1461518.1461544. ISBN 9781450378796. S2CID 9937095. Retrieved 18 October 2020.

13. Salton, Gerard; Wong, A; Yang, C S (1975). "A Vector Space Model for Automatic Indexing". Communications of the Association for Computing Machinery (CACM). 18 (11): 613–620. doi:10.1145/361219.361220. hdl:1813/6057. S2CID 6473756.

14. Dubin, David (2004). "The most influential paper Gerard Salton never wrote". Retrieved 18 October 2020.

15. Sahlgren, Magnus. "A brief history of word embeddings".

16. Kanerva, Pentti; Kristoferson, Jan; Holst, Anders (2000). "Random Indexing of Text Samples for Latent Semantic Analysis". Proceedings of the 22nd Annual Conference of the Cognitive Science Society. Mahwah, New Jersey: Erlbaum. p. 1036.

17. Karlgren, Jussi; Sahlgren, Magnus (2001). Uesaka, Yoshinori; Kanerva, Pentti; Asoh, Hideki (eds.). "From words to understanding". Foundations of Real-World Intelligence. CSLI Publications: 294–308.

18. Sahlgren, Magnus (2005). "An Introduction to Random Indexing". Proceedings of the Methods and Applications of Semantic Indexing Workshop at the 7th International Conference on Terminology and Knowledge Engineering (TKE 2005), August 16, Copenhagen, Denmark.

19. Sahlgren, Magnus; Holst, Anders; Kanerva, Pentti (2008). "Permutations as a Means to Encode Order in Word Space". Proceedings of the 30th Annual Conference of the Cognitive Science Society. pp. 1300–1305.

20. Bengio, Yoshua; Ducharme, Réjean; Vincent, Pascal; Jauvin, Christian (2003). "A Neural Probabilistic Language Model" (PDF). Journal of Machine Learning Research. 3: 1137–1155.

21. Bengio, Yoshua; Schwenk, Holger; Senécal, Jean-Sébastien; Morin, Fréderic; Gauvain, Jean-Luc (2006). A Neural Probabilistic Language Model. Studies in Fuzziness and Soft Computing. 194. pp. 137–186. doi:10.1007/3-540-33486-6_6. ISBN 978-3-540-30609-2.

22. Lavelli, Alberto; Sebastiani, Fabrizio; Zanoli, Roberto (2004). Distributional term representations: an experimental comparison. 13th ACM International Conference on Information and Knowledge Management. pp. 615–624. doi:10.1145/1031171.1031284.

23. Roweis, Sam T.; Saul, Lawrence K. (2000). "Nonlinear Dimensionality Reduction by Locally Linear Embedding". Science. 290 (5500): 2323–6. Bibcode:2000Sci...290.2323R. CiteSeerX 10.1.1.111.3313. doi:10.1126/science.290.5500.2323. PMID 11125150.

24. Morin, Fredric; Bengio, Yoshua (2005). "Hierarchical probabilistic neural network language model" (PDF). In Cowell, Robert G.; Ghahramani, Zoubin (eds.). Proceedings of the Tenth International Workshop on Artificial Intelligence and Statistics. Proceedings of Machine Learning Research. R5. pp. 246–252.

25. Mnih, Andriy; Hinton, Geoffrey (2009). "A Scalable Hierarchical Distributed Language Model". Advances in Neural Information Processing Systems 21 (NIPS 2008). Curran Associates, Inc. 21: 1081–1088.

26. "word2vec". Google Code Archive. Retrieved 23 July 2021.

27. Reisinger, Joseph; Mooney, Raymond J. (2010). Multi-Prototype Vector-Space Models of Word Meaning. Human Language Technologies: The 2010 Annual Conference of the North American Chapter of the Association for Computational Linguistics. Los Angeles, California: Association for Computational Linguistics. pp. 109–117. ISBN 978-1-932432-65-7. Retrieved October 25, 2019.

28. Huang, Eric (2012). Improving word representations via global context and multiple word prototypes. OCLC 857900050.

29. Camacho-Collados, Jose; Pilehvar, Mohammad Taher (2018). "From Word to Sense Embeddings: A Survey on Vector Representations of Meaning". arXiv:1805.04032 [cs.CL].

30. Neelakantan, Arvind; Shankar, Jeevan; Passos, Alexandre; McCallum, Andrew (2014). "Efficient Non-parametric Estimation of Multiple Embeddings per Word in Vector Space". Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing (EMNLP). Stroudsburg, PA, USA: Association for Computational Linguistics: 1059–1069. arXiv:1504.06654. doi:10.3115/v1/d14-1113. S2CID 15251438.

31. Ruas, Terry; Grosky, William; Aizawa, Akiko (2019-12-01). "Multi-sense embeddings through a word sense disambiguation process". Expert Systems with Applications. 136: 288–303. arXiv:2101.08700. doi:10.1016/j.eswa.2019.06.026. hdl:2027.42/145475. ISSN 0957-4174. S2CID 52225306.

32. Akbik, Alan; Blythe, Duncan; Vollgraf, Roland (2018). "Contextual String Embeddings for Sequence Labeling". Proceedings of the 27th International Conference on Computational Linguistics. Santa Fe, New Mexico, USA: Association for Computational Linguistics: 1638–1649.

33. Li, Jiwei; Jurafsky, Dan (2015). "Do Multi-Sense Embeddings Improve Natural Language Understanding?". Proceedings of the 2015 Conference on Empirical Methods in Natural Language Processing. Stroudsburg, PA, USA: Association for Computational Linguistics: 1722–1732. arXiv:1506.01070. doi:10.18653/v1/d15-1200. S2CID 6222768.

34. Asgari, Ehsaneddin; Mofrad, Mohammad R.K. (2015). "Continuous Distributed Representation of Biological Sequences for Deep Proteomics and Genomics". PLOS ONE. 10 (11): e0141287. arXiv:1503.05140. Bibcode:2015PLoSO..1041287A. doi:10.1371/journal.pone.0141287. PMC 4640716. PMID 26555596.

35. Kiros, Ryan; Zhu, Yukun; Salakhutdinov, Ruslan; Zemel, Richard S.; Torralba, Antonio; Urtasun, Raquel; Fidler, Sanja (2015). "Skip-Thought Vectors". arXiv:1506.06726 [cs.CL].

36. "GloVe".

37. Zhao, Jieyu; et al. (2018). "Learning Gender-Neutral Word Embeddings". arXiv:1809.01496 [cs.CL].

38. "ELMo".

39. Pires, Telmo; Schlinger, Eva; Garrette, Dan (2019-06-04). "How multilingual is Multilingual BERT?". arXiv:1906.01502 [cs.CL].

40. "Gensim".

41. "Indra". GitHub. 2018-10-25.

42. Ghassemi, Mohammad; Mark, Roger; Nemati, Shamim (2015). "A Visualization of Evolving Clinical Sentiment Using Vector Representations of Clinical Notes" (PDF). Computing in Cardiology.

43. "Embedding Viewer". Embedding Viewer. Lexical Computing. Retrieved 7 Feb 2018.